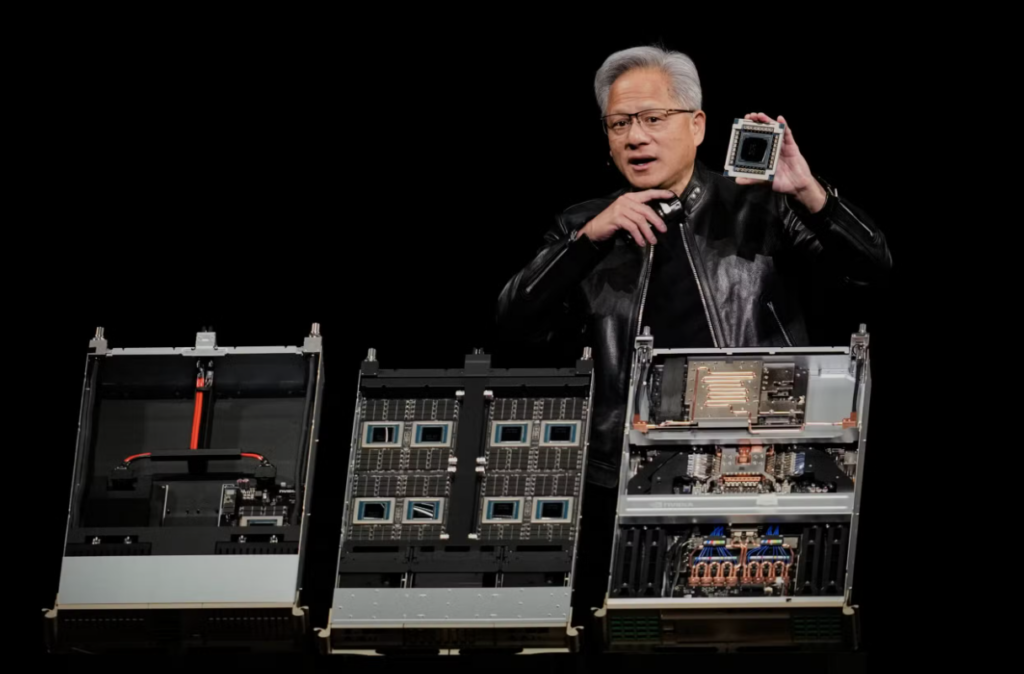

SAN JOSE, California — At the forefront of the global technology shift, NVIDIA CEO Jensen Huang has declared the arrival of the “inference inflection point,” signaling a major transition in the artificial intelligence landscape. Speaking at a recent industry summit, Huang emphasized that while the first phase of the AI boom was defined by “training”—teaching models how to understand and generate data—the next massive wave of growth will come from “inference,” or the actual deployment and scaling of these models in real-world applications.

This shift marks a critical evolution for the semiconductor giant and the broader tech economy. Inference occurs every time an AI model is used to answer a query, generate code, or analyze medical images. As AI becomes integrated into every layer of software and hardware, the demand for chips capable of running these models efficiently and at scale is expected to outpace the initial demand for training hardware.

“We are at the beginning of a new industrial revolution,” Huang stated. “The transition from training to inference is where the true value of AI is unlocked for businesses and consumers alike. It is no longer just about building the brain; it is about putting that brain to work in every factory, every car, and every smartphone in the world.”

To capitalize on this inflection point, NVIDIA is rolling out new architectures optimized for high-speed, low-latency inference. The designs are intended to handle the heavy computational loads of real-time generative AI while reducing power consumption, a major concern for data center operators worldwide.

Industry analysts suggest that this phase will broaden the AI market beyond a few tech giants. As inference costs drop, smaller enterprises and developers will be able to deploy sophisticated AI agents for specialized tasks, ranging from localized weather forecasting to personalized digital assistants.

The “inference inflection” also carries significant implications for global supply chains. With the demand for specialized AI silicon reaching new heights, manufacturers are racing to expand capacity. For emerging tech hubs and digital economies, this represents a pivotal moment to integrate into the AI value chain, whether through software development or data center infrastructure.

As NVIDIA continues to exceed market expectations, Huang’s remarks suggest that AI is moving out of the laboratory and into the infrastructure of daily life, changing how people interact with technology.